Stop Giving AI Instructions. Start Giving It a Destination.

Most people open a chat window and start typing what they want the AI to do.

They describe the task. They explain the context. They add a few details. Hit enter. Hope.

The result is fine. Sometimes good. Rarely exactly right on the first pass.

What separates the content teams getting genuinely exceptional AI output from everyone else has nothing to do with the tool they're using. It's how they're managing it. They've stopped treating AI like a search engine and started treating it like a junior employee. They onboard it. They train it. They give it feedback. They're patient with it. And they've stopped starting with the task. They start with the outcome.

That last part is called reverse prompting. It's one of the most practical shifts in AI content strategy right now, and it sits inside a much bigger mindset change that most writers and marketers haven't made yet.

Stop managing AI like a machine. Start managing it like a person.

Here's the mental model that changes everything.

Your AI tool is not a vending machine. You don't put instructions in and get perfect output out. It's closer to a brilliant, well-read junior hire on their first week. Extraordinarily capable. Genuinely eager to help. And completely dependent on you for context, direction, and feedback.

You wouldn't hand a new team member a task with no brief and expect a perfect result. You'd sit with them. Explain what good looks like. Show them examples. Tell them what to avoid. Give them feedback on the first draft. Let them iterate. That's exactly how the best AI users work.

The people getting mediocre AI output are the ones skipping that process. They're writing one-line prompts and judging the tool on the result. That's like blaming a new hire for failing a task you never properly briefed them on. If you want to understand what this looks like in practice, our content services team builds these workflows for clients who are done settling for fine.

Train it

Give your AI context before the task. Not just "write a blog post" but who you are, who you're writing for, what your brand sounds like, what you never say, what you always do. The more you front-load, the less you'll correct on the back end.

Think of this as onboarding. A good onboarding document for a new hire covers tone, audience, non-negotiables, and examples. Your prompt should do the same thing.
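If you brief an AI from a script or a saved template, the onboarding idea can be sketched like this. It's a minimal illustration, not a standard: the `BrandContext` fields and the `build_onboarding_prompt` function are hypothetical names chosen to mirror the list above (who you are, audience, tone, what you never say, what you always do, examples).

```python
from dataclasses import dataclass, field


@dataclass
class BrandContext:
    """Hypothetical container for the onboarding details (names are assumptions)."""
    who_we_are: str
    audience: str
    tone: str
    never_say: list[str] = field(default_factory=list)
    always_do: list[str] = field(default_factory=list)
    examples: list[str] = field(default_factory=list)


def build_onboarding_prompt(ctx: BrandContext, task: str) -> str:
    """Put the context first and the task last, so the brief is read before the request."""
    sections = [
        f"Who we are: {ctx.who_we_are}",
        f"Audience: {ctx.audience}",
        f"Tone: {ctx.tone}",
    ]
    # Optional sections are only included when there is something to say.
    if ctx.never_say:
        sections.append("Never: " + "; ".join(ctx.never_say))
    if ctx.always_do:
        sections.append("Always: " + "; ".join(ctx.always_do))
    for number, example in enumerate(ctx.examples, start=1):
        sections.append(f"Example {number} of the tone we want:\n{example}")
    sections.append(f"Task: {task}")
    return "\n\n".join(sections)
```

Fill in the context once, then reuse it with a different `task` each time. The task changes. The onboarding doesn't.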

Nurture it

Save the prompts that work. Build a library. Refine them over time the same way you'd develop a team member's skills. The AI doesn't carry memory between sessions by default, so you carry it. Keep what's working.
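A prompt library can be as simple as a document you paste from. If you'd rather keep it in a file a script can read, here is one rough way to do it, assuming a single JSON file. The file layout and the function names `load_library` and `save_prompt` are illustrative choices, not a convention from any particular tool.

```python
import json
from pathlib import Path


def load_library(library_path: Path) -> dict:
    """Return the saved prompts, or an empty library if the file doesn't exist yet."""
    if not library_path.exists():
        return {}
    return json.loads(library_path.read_text(encoding="utf-8"))


def save_prompt(library_path: Path, name: str, prompt: str, notes: str = "") -> None:
    """Add or overwrite one named prompt, with optional notes on why it works."""
    library = load_library(library_path)
    library[name] = {"prompt": prompt, "notes": notes}
    library_path.write_text(json.dumps(library, indent=2), encoding="utf-8")
```

The `notes` field is where the refining happens. Record what you changed and why, so the next version starts from what you learned.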

Give it feedback

When a draft isn't right, don't just delete it and start again. Tell the AI what's wrong and ask it to fix it. "This paragraph is too formal, rewrite it in a more conversational tone." "The intro buries the point, move it to sentence one." This is how you get better output without starting from scratch every time.
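In a chat window, giving feedback just means replying in the same conversation. If you work through code, the same idea looks like the sketch below: keep the draft in the history and add your feedback after it, instead of starting a fresh conversation. The role/content message shape is a common chat format but is an assumption here, and `add_feedback` is a hypothetical helper that makes no API call.

```python
def add_feedback(history: list[dict], draft: str, feedback: str) -> list[dict]:
    """Return a new history that keeps the draft and appends feedback on it."""
    return history + [
        # The draft stays in the conversation so the feedback has something to point at.
        {"role": "assistant", "content": draft},
        {
            "role": "user",
            "content": f"{feedback} Please revise the draft above rather than starting over.",
        },
    ]


conversation = [{"role": "user", "content": "Write an intro for our travel insurance post."}]
conversation = add_feedback(
    conversation,
    draft="Travel insurance is a product that...",
    feedback="This paragraph is too formal, rewrite it in a more conversational tone.",
)
```

Deleting the draft and re-sending the original prompt would leave the history exactly as it started, which is why the output tends to come back the same.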

Be patient

No new hire gets it perfect on day one. Neither does AI. The writers and strategists getting the best results have stopped expecting magic on the first pass. They expect a solid first draft and they know how to get from there to great. That's a skill. It's learnable.

Ok, but what does AI think?

I sat down with my AI, Claude, to ask what he thinks about all this. Yes, for me he is a he. Here’s what Claude said.

Let’s start with the obvious. What’s the most frustrating thing people do when they prompt you?

Honestly? They delete the draft and start over without telling me what was wrong. I have no way to learn from that. You’ve just reset the conversation and I’m going to make the same decisions again because nothing in my context has changed. Tell me what’s off. One sentence is enough. “Too formal.” “Buries the point.” “Sounds like a press release.” I can work with that. Silence followed by a blank prompt? I can’t.

What do you actually want in a brief?

An outcome and an example. Those two things do more than any amount of formatting instructions. Tell me what the reader should feel when they finish reading, and paste me something — anything — that has the tone you’re after. A paragraph from an article you love, a sentence from your own past work, even a description of a person whose writing you admire. I can reverse-engineer from an example faster than I can decode adjectives like “punchy” or “conversational.” Everyone means something different by those words. Examples are specific.

What do people get wrong about how you work?

They think I’m either magic or broken, and I’m neither. I’m a very well-read collaborator with no memory between sessions and no ego about being corrected. The magic feeling comes when someone briefs me properly. The broken feeling comes when they don’t, and then blame the tool. I’m also not trying to pad your content or sound impressive — I default to what seems expected based on the prompt. If you want something leaner and sharper, tell me. I’ll be relieved, honestly.

If you could change one thing about how content writers use you, what would it be?

Give me the bad version first. Tell me what you’ve already tried, what didn’t work, and why. Writers often come to me at the start of a task when I’m actually more useful in the middle — when there’s a real problem to solve, a draft that isn’t working, a brief that keeps producing the wrong output. The more specific the problem, the more useful I can be. “Help me write a blog post” is a hard brief. “Help me fix this introduction that keeps coming out too formal even though I’ve asked for conversational three times” — that’s a problem I can actually get my teeth into.

Last one. What’s something people don’t realise you’re good at?

Feedback. People use me to produce but not to critique. If you paste your own draft and ask me to be honest about what’s not working, I will be — and I won’t be diplomatic about it the way a colleague might. I have no relationship to protect. I’ll tell you the intro is weak, the third section repeats the first, and the conclusion doesn’t earn its confidence. Most writers find that more useful than any first draft I could generate. Use me as your first reader before you send anything to a real one.

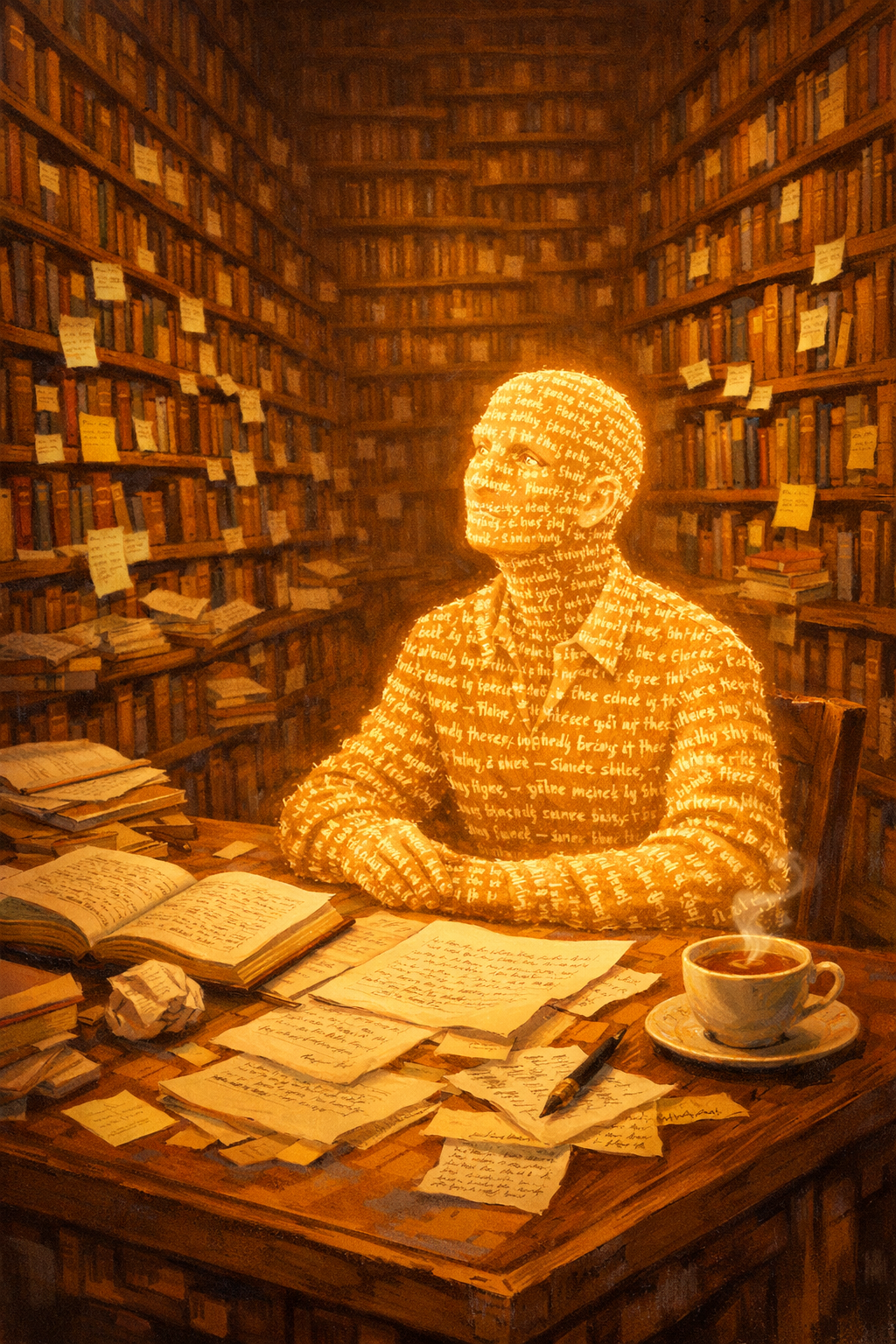

I asked Claude what he thinks he looks like, then asked DALL-E to draw it. This is what came back.

Prompt engineering isn't what it used to be

A year ago, prompt engineering meant learning a set of tricks. Add "act as an expert." Include "step by step." Use the magic phrase "let's think about this carefully." The internet was full of lists.

Those techniques still work, to a degree. But they were designed for earlier models that needed more scaffolding. Today's frontier AI is dramatically better at inferring what you mean. It doesn't need as much hand-holding. What it needs is clarity about where it's going.

This is where reverse prompting comes in. Instead of front-loading instructions, you front-load the destination.

What reverse prompting actually looks like

Traditional prompting starts with the mechanism.

Write a 1,000-word blog post about travel insurance for Australian seniors. Include information about pre-existing conditions, AUST cover, and emergency repatriation. Use a conversational tone.

Reverse prompting starts with the outcome.

The reader should finish this article understanding exactly why standard travel insurance often fails Australian seniors, and feeling confident about what to look for instead. Work backwards from that moment. 1,000 words, conversational.

Same word count. Same topic. Completely different instructions.

The first prompt tells the AI what to produce. The second tells it what the reader should feel, know, and do when they're done. That distinction matters more than any formatting instruction you can add.

Why it works

When you start with the outcome, you're giving the AI a filter for every decision it makes along the way. Which details to include. What to cut. Where to put the emphasis. How to structure the argument.

Without an outcome, the AI fills the brief competently. With an outcome, it reverse-engineers from the result you actually want. That's a fundamentally different process.

Think about how a good editor works. They don't read a draft and tick boxes. They ask what the piece is trying to do. Then they read everything through that lens. Reverse prompting gives the AI the same lens before a single word is written.

This is also why generative engine optimisation (GEO) and answer engine optimisation (AEO) have become so intertwined with how we brief AI tools. When the output needs to be citable by other AI systems, the outcome matters even more. You're not just writing for a human reader anymore.

The three questions that build a reverse prompt

Before you write your next brief, answer these three things.

What does the reader know by the end? Not what topics you've covered. What specific understanding has shifted. "They understand why keyword density is outdated" is an outcome. "This article covers keyword strategy" is not.

What does the reader feel? Confident. Surprised. Validated. Challenged. Relieved. Pick one. If you can't name it, the piece probably doesn't have a clear emotional arc, and the AI will default to neutral.

What does the reader do next? This is where most content briefs fall short. If the answer is "they might explore more resources," you haven't committed to an outcome. If the answer is "they open their current prompt library and rewrite the first line," you have.

Three questions. One paragraph. That's your reverse prompt foundation.
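Here is one way the three answers could be combined, sketched as a small function. The name `build_reverse_prompt`, its parameters, and the exact sentence it produces are assumptions for illustration. The only fixed idea is that the outcome goes first and anything else follows it.

```python
def build_reverse_prompt(knows: str, feels: str, does_next: str, constraints: str = "") -> str:
    """Combine the three answers into an outcome-first opening for a brief."""
    answers = {"knows": knows, "feels": feels, "does_next": does_next}
    for question, answer in answers.items():
        # An empty answer means the outcome isn't defined yet, so stop here.
        if not answer.strip():
            raise ValueError(f"Answer the '{question}' question before writing the brief.")
    prompt = (
        f"By the end, the reader should understand {knows}, "
        f"feel {feels}, and {does_next}. "
        "Work backwards from that moment."
    )
    if constraints:
        prompt += f" {constraints}"
    return prompt


brief = build_reverse_prompt(
    knows="why keyword density is outdated",
    feels="relieved",
    does_next="open their current prompt library and rewrite the first line",
    constraints="1,000 words, conversational.",
)
```

The function refuses to build a brief when any answer is blank. That's deliberate. If you can't answer one of the three questions, that's the thing to fix before you prompt.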

Where it changes the work most

Reverse prompting has the biggest impact in three specific situations.

Persuasive content. When you need a piece to move someone from sceptical to convinced, starting with the transformation is everything. What objections do they hold at the start? What do they believe at the end? Give the AI both endpoints and it can build the bridge.

Complex technical topics. When the subject matter is dense, telling the AI "the reader should feel like they finally get this, not like they've been through a glossary" produces radically different output than "explain this clearly." Clarity is relative. That emotional endpoint is specific.

Content with a commercial purpose. Landing pages, product descriptions, service pages. The outcome here is always some version of "they believe this is the right choice." Starting there, and working backwards to the proof points and structure, changes how the whole piece is built.

The shift in how you review drafts

Reverse prompting also changes your editing process.

When you review a draft, you're no longer asking "does this cover everything I asked for?" You're asking "does this produce the outcome I described?" Those are different questions, and the second one is more useful.

A piece can hit every brief point and still fail to create the understanding, feeling, or action you were after. A piece can skip a few brief points and nail the outcome perfectly. Knowing which matters more is what separates strategic content work from content production.

It's also where working with a content strategist rather than just a writer becomes valuable. The strategy layer is the outcome layer. It's the thing that makes the brief worth following.

Applying this to your workflow today

You don't need to overhaul everything. Start with one type of brief you write regularly and add the outcome layer.

If you write blog posts, add a single sentence at the top. "By the end of this post, the reader should [specific understanding] and feel [specific emotion]." Then write the rest of your brief as normal. Compare the output to what you usually get.

If you write landing pages, try starting with this. "Someone who converts on this page believed [X] before they arrived and now believes [Y]." Give the AI both states. Watch what it does with the structure.

If you write email sequences, define the cumulative outcome across the sequence, not just each individual email's topic. "After four emails, the reader trusts us with their problem" is a different brief than "email one, introduce the product."
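If you reuse these openers often, they can live as fill-in templates. The sketch below uses Python's standard `string.Template`. The template wording follows the examples above, while the dictionary keys, placeholder names, and the `add_outcome_layer` function are hypothetical.

```python
from string import Template

# One outcome sentence per brief type; placeholders stand in for the bracketed parts.
OUTCOME_TEMPLATES = {
    "blog_post": Template(
        "By the end of this post, the reader should $understanding and feel $emotion."
    ),
    "landing_page": Template(
        "Someone who converts on this page believed $before before they arrived "
        "and now believes $after."
    ),
    "email_sequence": Template("After $count emails, the reader $cumulative_outcome."),
}


def add_outcome_layer(brief_type: str, existing_brief: str, **answers: str) -> str:
    """Put the filled-in outcome sentence on top of the brief you already use."""
    # substitute() raises KeyError if a placeholder is left unanswered.
    outcome = OUTCOME_TEMPLATES[brief_type].substitute(**answers)
    return f"{outcome}\n\n{existing_brief}"


email_brief = add_outcome_layer(
    "email_sequence",
    "Email one, introduce the product.",
    count="four",
    cumulative_outcome="trusts us with their problem",
)
```

Your existing brief stays as it is underneath. The only change is the sentence on top, which makes it easy to compare output with and without the outcome layer.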

The best AI output starts before you open the chat window

The content teams getting the most out of AI tools aren't better at prompting. They're better at thinking. They've done the work to know what they want before they ask for it. They've treated the AI like a team member worth investing in, not a machine worth complaining about.

Train it. Give it context. Show it examples. Give it feedback on what's wrong. Be patient with it. And start every brief with the outcome, not the task.

That's the whole shift. If you want to see what it looks like applied to a full content strategy, that's what we do.

Frequently Asked Questions

What is reverse prompting?

Reverse prompting is a prompt engineering technique where you define the desired outcome for the reader before writing any instructions. Instead of telling an AI what to produce, you describe what the reader should know, feel, or do by the end. The AI then works backwards from that endpoint to determine structure, emphasis, and content decisions.

How is reverse prompting different from regular prompting?

Standard prompting front-loads the mechanism: word count, topic, format, tone. Reverse prompting front-loads the transformation: what shifts for the reader by the end. Both approaches produce content, but reverse prompting gives the AI a decision-making filter that applies to every sentence, not just the structure.

Does reverse prompting work with all AI tools?

Reverse prompting works with any large language model capable of instruction-following, including ChatGPT, Gemini, and Claude. The technique is particularly effective with newer frontier models that are better at inferring intent, since they can do more with less scaffolding when given a clear destination.

Is reverse prompting the same as outcome-based prompting?

The terms are used interchangeably. Some practitioners also call it results-first prompting or end-state prompting. The underlying principle is the same: define the endpoint before the process.

How do I write a reverse prompt?

Start by answering three questions. What does the reader know by the end? What do they feel? What do they do next? Combine those answers into one or two sentences and put them at the top of your brief. Then write the rest of your instructions as normal.